Casalux Ambiente AFL-1 WLED Upgrade

The Casalux Ambiente AFL-1 floor lamp, sold by Aldi/Hofer for ~€18, is a great base for smartification with a simple WLED upgrade. Here’s a teardown and upgrade guide.

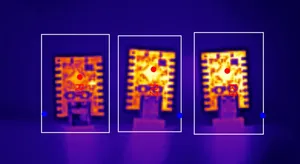

I recently upgraded an RGBW floor lamp with an ESP board running WLED — and almost burned my fingers. Since the board had to fit inside a small, closed case, I started wondering: how hot do different ESP boards get when running WLED?

Aurio version 4, released yesterday, is a significant update that brings a host of modernizations and improvements. It is now available in the NuGet Gallery, features an updated build setup, improved cross-platform support, FFmpeg compatibility on Linux, new functionalities, optimizations, and numerous bug fixes.

What’s better than an RGB party spotlight? An RGB party spotlight with the great LED controller firmware WLED! This guide shows how to easily upgrade the USB PartyPar 6 RGB light by Fun Generation.

The cheapest fog machines typically come with a wired remote control with an indicator light and a button. The indicator light signals when the machine is at its operating temperature and ready to emit fog, and the button makes it emit fog while pressed. However, this turned out to be inconvenient once I found myself repeatedly running to the machine to trigger it. And even if the remote were wireless, it would still need manual handling. So this calls for automation, which is quite easy to achieve without DIY electronics, using a Wi-Fi Shelly switch and a relay. Read on for a demo video, build instructions, and a Home Assistant automation.

A smart home sometimes needs to notify its users of certain events or states. Instead of installing dedicated notification devices, why not use the smart lights which are present in most smart homes anyway? This turned out to be much more difficult than it sounds. However, I found that WLED, a great firmware for LED light strip controllers, offers an interface almost predestined to implement visual notification effects, and so I wrote and published a Node-RED node which does exactly that. Visual notification effects, simple to use, without side effects. It’s open source and now available on GitHub, npm, and the Node-RED library. Watch the demo video and read on for more details.
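To give a rough idea of why WLED lends itself to temporary effects, here is a minimal Python sketch that uses WLED's UDP realtime protocol in DRGB mode. Whether the Node-RED node uses this particular interface is an assumption, and the function names `build_drgb_packet` and `send_notification` are hypothetical. The sketch relies on the timeout byte in the packet: once no further packets arrive within that many seconds, WLED returns to its previous state by itself.

```python
import socket

WLED_REALTIME_PORT = 21324  # WLED's default UDP realtime port


def build_drgb_packet(colors, timeout_s=2):
    """Build a DRGB realtime packet: [protocol=2, timeout, R, G, B, ...].

    colors is a list of (r, g, b) tuples, one per LED, starting at LED 0.
    timeout_s is how long WLED stays in realtime mode after the last packet
    (255 would mean "no timeout", so it is rejected here).
    """
    if not 1 <= timeout_s <= 254:
        raise ValueError("timeout_s must be between 1 and 254")
    packet = bytearray([2, timeout_s])  # 2 selects the DRGB protocol
    for r, g, b in colors:
        packet += bytes((r, g, b))  # raises ValueError if a value is not 0..255
    return bytes(packet)


def send_notification(host, led_count, color, timeout_s=2):
    """Hypothetical helper: light all LEDs in one color for timeout_s seconds."""
    packet = build_drgb_packet([color] * led_count, timeout_s)
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(packet, (host, WLED_REALTIME_PORT))
```

Calling `send_notification("192.168.1.50", 30, (255, 0, 0))` with a placeholder address and LED count would show red for about two seconds, after which the strip falls back to whatever it was displaying before.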

In this talk at the Demuxed 2022 conference for video engineers, I explain how my team and I transformed the testing process of the Bitmovin Video Player and scaled test executions from hundreds to millions.

Two years ago I was approached by someone from a public TV broadcaster in Germany with the following problem: Given multiple video files with differently cut versions of the same production, is it possible to use the technology from AudioAlign/Aurio to automatically generate edit decision lists (EDL/XML) and use them to transfer subtitles from a reference version to the different cuts? The answer is “yes”, and that’s just one of many use cases. This article describes the challenges and how Aurio solves them almost magically in a successful prototype developed for the TV station.

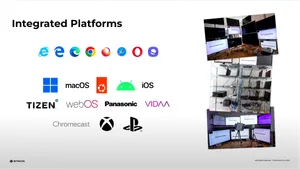

A collaboration with eyecandylab, a company developing products for augmenting TV programs, recently gave me the opportunity to implement great new features in Aurio. The most recent version, released today, extends the architecture to support processing of real-time audio streams of infinite length, which means that live streams can now be fingerprinted on the fly with minimal latency. Additionally, the Aurio core library has been ported to .NET Standard 2.0 and runs on the .NET Core 2.0 framework on Windows, Linux, and macOS, which makes it possible to build microservices in containerized environments like Docker.

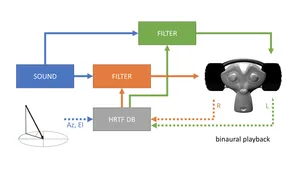

This October I talked about spherical audio for 360°/VR videos at Demuxed, a conference for video engineers. I explain the principles of the Ambisonics surround sound technique from recording to playback through a speaker array or headphones with binaural playback, and how it can be used in web applications and video players through the Web Audio API.